You ask an AI assistant what year something happened. It says 1987. You ask again. It says 1989. You ask a third time. It says it’s uncertain. This is disorienting — and it happens for specific technical reasons that most explanations of AI don’t bother to make clear.

Here’s the honest, plain-language explanation.

The “Temperature” Setting Explained

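AI language models have a parameter called “temperature” that controls how much randomness goes into their outputs. At each step, the model assigns a probability to every possible next token (a word or a piece of a word), and temperature reshapes those probabilities before one is picked. At temperature 0, the model always picks the single most likely token, so the same question should produce the same answer every time (in practice, small numerical effects on the serving hardware can still cause occasional differences). At higher temperatures, the distribution is flattened and the model samples from it, so it sometimes picks the second or third most likely token instead of the top one.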
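To make this concrete, here is a minimal Python sketch of how temperature is commonly applied: the model's raw scores (logits) are divided by the temperature before being turned into probabilities with a softmax. The candidate words and their scores are made up for illustration, and treating temperature 0 as "always pick the top choice" mirrors how it is usually described rather than any specific product's code.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Turn raw scores (logits) into probabilities, scaled by temperature.

    temperature == 0 is treated as greedy decoding: all probability goes
    to the highest-scoring option (ties go to the first one).
    """
    if temperature < 0:
        raise ValueError("temperature must be non-negative")
    if temperature == 0:
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for three candidate next words after "The event happened in"
candidates = ["1987", "1989", "1991"]
logits = [2.0, 1.5, 0.5]

for t in (0, 0.5, 1.0, 1.5):
    probs = softmax_with_temperature(logits, t)
    print(t, [f"{w}: {p:.2f}" for w, p in zip(candidates, probs)])
```

Running it shows the probability of the top choice, "1987", shrinking as the temperature rises, which is exactly the extra room that lets "1989" or "1991" appear.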

This is intentional design. Temperature 0 tends to produce answers that are consistent but can feel robotic and repetitive. Higher temperatures produce more varied, creative, and natural-sounding responses, at the cost of consistency. Consumer AI applications generally run at a moderate, nonzero temperature (figures between 0.5 and 1.0 are often cited, though vendors rarely publish exact values), which means variability is deliberately built in.

How AI Actually Generates Words

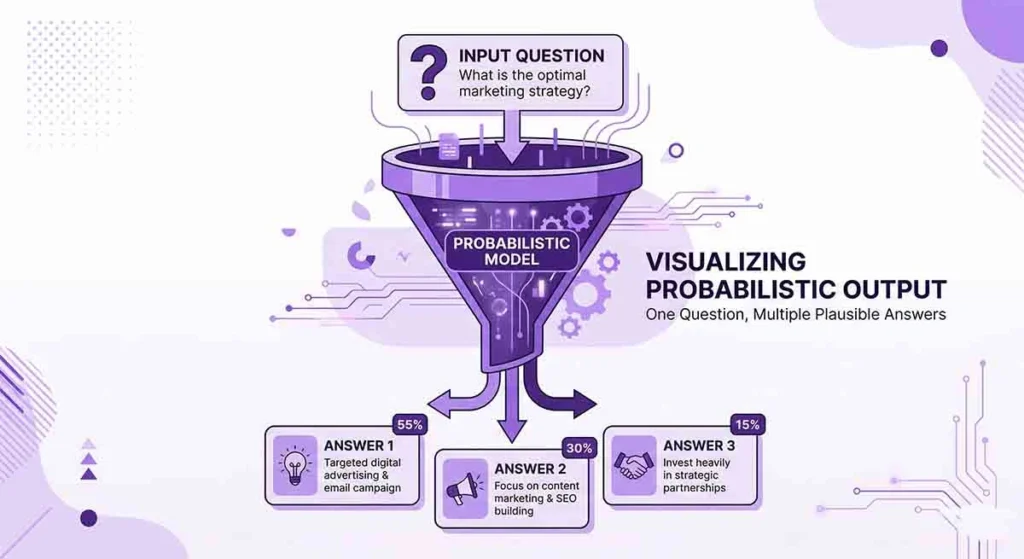

This is the most important thing to understand about why AI varies: AI language models don’t look up answers. They predict the next token (roughly, a word or word fragment) from probability distributions learned from training data. Every token generated is a probabilistic selection from a distribution of possible next tokens, conditioned on everything that came before.

For any given input, there are many possible “correct” continuations, not just one. The model doesn’t have a single stored answer to your question. It generates a fresh answer each time from its learned parameters, and each generation is a new sampling event from those probability distributions. That is why the output can vary.
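The loop below is a toy illustration of that process, not how any real model is built: a hand-written table of next-word probabilities (entirely hypothetical) stands in for the model, and a sentence is produced by sampling one word at a time. Because each run samples afresh, asking the "same question" several times can yield different years.

```python
import random

# A hypothetical, hand-made table of next-word probabilities.
# Real models compute these from billions of learned parameters and condition
# on the whole preceding text, not just the previous word.
NEXT_WORD = {
    "<start>": {"It": 1.0},
    "It": {"happened": 0.7, "occurred": 0.3},
    "happened": {"in": 1.0},
    "occurred": {"in": 1.0},
    "in": {"1987": 0.5, "1989": 0.4, "1991": 0.1},
    "1987": {"<end>": 1.0},
    "1989": {"<end>": 1.0},
    "1991": {"<end>": 1.0},
}

def generate(rng, max_words=10):
    """Build a sentence one word at a time by sampling from NEXT_WORD."""
    word, output = "<start>", []
    for _ in range(max_words):
        options = NEXT_WORD[word]
        # Weighted random pick of the next word, like one sampling step.
        word = rng.choices(list(options), weights=list(options.values()), k=1)[0]
        if word == "<end>":
            break
        output.append(word)
    return " ".join(output)

rng = random.Random()  # unseeded: each run of the script can differ
for _ in range(3):
    print(generate(rng))
```

Passing a fixed seed, as in `random.Random(42)`, replays the same random draws and so reproduces the same sentence.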

Why AI Is Not a Database

A common misconception about AI is that it stores and retrieves facts like a database. It doesn’t. A database stores “Paris is the capital of France” as an explicit fact that is retrieved and returned identically every time. An AI language model has learned from an enormous number of documents that contain information about Paris and France, and has encoded patterns that make it likely to generate accurate information about them. But it’s not retrieving a stored record. It’s predicting a plausible completion.
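The contrast can be sketched in a few lines of Python. The dictionary plays the role of a database; the second function is a deliberately simplified stand-in for a model, sampling a completion from a hypothetical probability distribution instead of retrieving a record.

```python
import random

# A database-style lookup: one stored record, returned identically every time.
capitals_db = {"France": "Paris"}

def db_lookup(country):
    return capitals_db[country]

# A toy stand-in for a language model: no stored record, just a learned
# distribution over possible completions. These probabilities are
# hypothetical; for a well-documented fact they would be very peaked.
completion_probs = {"Paris": 0.97, "Lyon": 0.02, "Marseille": 0.01}

def model_complete(rng):
    words = list(completion_probs)
    return rng.choices(words, weights=list(completion_probs.values()), k=1)[0]

rng = random.Random()
print([db_lookup("France") for _ in range(5)])   # always the same record
print([model_complete(rng) for _ in range(5)])   # usually "Paris", but sampled
```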

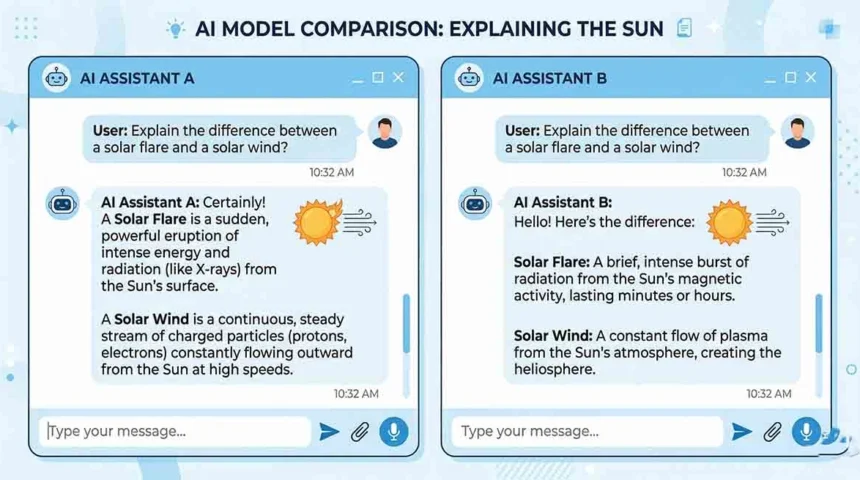

This distinction matters enormously for how you should use AI. For factual recall of specific data (dates, numbers, proper nouns), AI is less reliable than a database or a well-indexed search engine precisely because retrieval isn’t what it’s doing. For synthesis, explanation, reasoning, and language tasks, AI is often extraordinary — because those tasks benefit from the probabilistic, pattern-matching process that also causes factual variability.

When AI Answers Are More vs. Less Consistent

More consistent: Questions with very strong signal in training data (widely documented facts, common knowledge), simple and common calculations, code syntax, well-established procedures.

Less consistent: Questions about specific numbers or dates (especially less-famous ones), nuanced opinion questions, questions about recent events near or after training cutoff, and any question where the training data itself contains multiple contradictory claims.
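One way to put numbers on "more" versus "less" consistent: if each time you ask, the answer is drawn independently from the same distribution p, the chance that two asks agree is the sum of the squared probabilities. The sketch below computes that for two hypothetical distributions, one peaked (a well-documented fact) and one spread out (an obscure date the training data disagrees about). It treats each whole answer as a single draw, which simplifies away token-by-token generation.

```python
def agreement_probability(probs):
    """Chance that two independent samples from `probs` pick the same answer."""
    return sum(p * p for p in probs.values())

# Hypothetical answer distributions, for illustration only.
well_documented = {"Paris": 0.98, "Lyon": 0.01, "Marseille": 0.01}
obscure_date = {"1987": 0.40, "1989": 0.35, "1991": 0.25}

print(f"well documented: {agreement_probability(well_documented):.3f}")
print(f"obscure date:    {agreement_probability(obscure_date):.3f}")
```

With these made-up numbers, the peaked distribution agrees with itself about 96% of the time and the spread-out one only about 35% of the time.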

Practical Implications for How You Use AI

- Verify specific facts independently. For specific dates, numbers, names, or citations, treat AI output as a starting point for verification — not a final source.

- Use AI for reasoning and synthesis, not just fact retrieval. These are its actual strengths. Asking it to explain, compare, summarize, or generate is more reliably useful than asking it to recall specific data.

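- Ask for confidence levels explicitly. “How confident are you in this?” can prompt AI to flag uncertainty it might otherwise present with false assurance. A model’s stated confidence is not always well calibrated, though, so treat it as a hint rather than a guarantee.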

- If consistency matters, lower the temperature if the tool exposes that setting (developer APIs and playgrounds usually do; many consumer chat apps don’t), or provide more context in your prompt to narrow the range of plausible answers.
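As a rough illustration of why lowering the temperature helps, the self-contained sketch below scales some hypothetical answer scores by temperature (logits divided by T, then a softmax) and computes the chance that two independent answers agree (the sum of squared probabilities). The scores are invented and real models work token by token, so treat this as intuition rather than a measurement.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Probabilities from logits; temperature 0 means always pick the top one."""
    if temperature == 0:
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def agreement_probability(probs):
    """Chance that two independent samples give the same answer."""
    return sum(p * p for p in probs)

# Hypothetical scores for three candidate answers (e.g. three different years).
logits = [2.0, 1.5, 0.5]

for t in (1.0, 0.7, 0.3, 0.0):
    probs = softmax_with_temperature(logits, t)
    print(f"temperature {t}: agreement {agreement_probability(probs):.2f}")
```

Agreement climbs as the temperature falls and reaches 1.0 at temperature 0, where the top answer is always chosen.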